Best Practices in Prototyping

Ensuring Algorithm Robustness

Another factor to consider during AI/ML model prototyping is whether the model will be robust when deployed in production. This means thinking through whether the prototyped model pipelines can handle real-world data, including poor data quality, gaps or missing data, and potential adversarial attacks.

Model robustness is not entirely an algorithmic task. Business rules, both for pre-processing of data sets and for post-processing after an algorithm is run, can be appropriate to ensure model robustness. Robustness must also be considered along the entire end-to-end pipeline, from data ingestion to model outputs, including the way the output will be used in the business process.
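As a rough illustration of how business rules can wrap a model, the sketch below applies pre-processing rules (discard implausible readings, impute small gaps, refuse to score when too much data is missing) and a post-processing rule (clamp the output to a valid range). Everything in it is an assumption made for illustration: the names `preprocess`, `toy_model`, `postprocess`, and `robust_predict`, the valid range, and the missing-data threshold are hypothetical and do not come from the original text.

```python
import math
from typing import Optional, Sequence

# Hypothetical limits for an illustrative sensor-driven model.
VALID_RANGE = (0.0, 150.0)    # plausible physical range for each reading
MAX_MISSING_FRACTION = 0.2    # refuse to score if more than 20% is unusable


def preprocess(readings: Sequence[Optional[float]]) -> Optional[list]:
    """Pre-processing rules: drop implausible values, then impute small gaps."""
    if not readings:
        return None
    lo, hi = VALID_RANGE
    cleaned = [
        r if r is not None and not math.isnan(r) and lo <= r <= hi else None
        for r in readings
    ]
    valid = [r for r in cleaned if r is not None]
    missing = len(cleaned) - len(valid)
    if not valid or missing / len(cleaned) > MAX_MISSING_FRACTION:
        return None  # too little trustworthy data to score
    mean = sum(valid) / len(valid)
    return [r if r is not None else mean for r in cleaned]


def toy_model(features: list) -> float:
    """Stand-in for a trained model: returns an unbounded risk score."""
    return 0.02 * max(features) - 0.5


def postprocess(score: float) -> float:
    """Post-processing rule: clamp the score to a valid probability."""
    return min(1.0, max(0.0, score))


def robust_predict(readings: Sequence[Optional[float]]) -> Optional[float]:
    features = preprocess(readings)
    if features is None:
        return None  # e.g. route to manual review instead of guessing
    return postprocess(toy_model(features))
```

Returning `None` when the input fails the data-quality rules is one possible design choice; it lets the surrounding business process decide what to do rather than acting on a prediction built from unreliable data.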

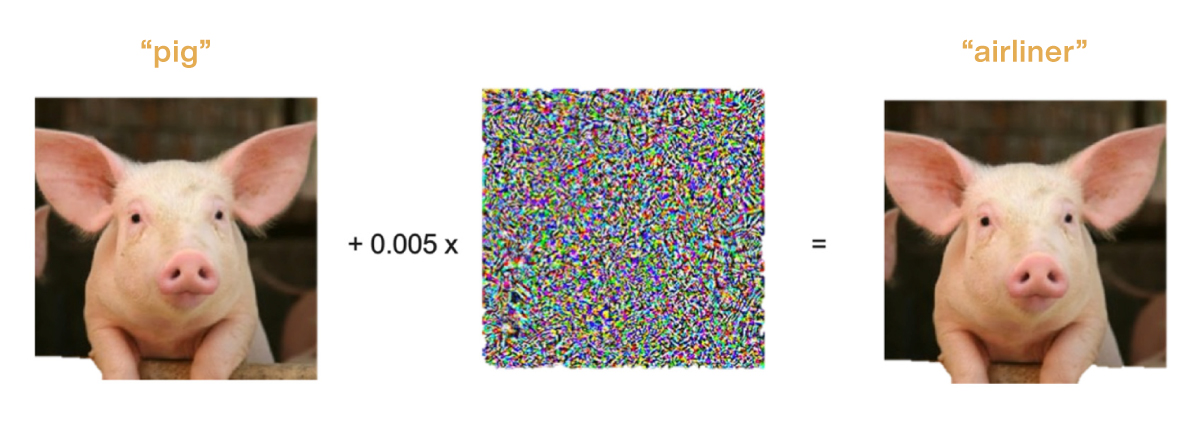

One example of the effect of a non-robust model appears in Figure 36. In this example, a deep learning model was trained to label images of pigs. The original model successfully labeled the image on the left as a “pig.”

However, in this case, adding a small amount of noise (0.05%) to the original image, as could result from an adversarial attack, leads to a dramatically different outcome. When the modified image was passed through the same deep learning model, the model labeled it as an “airliner.”

Figure 36: Small changes that are imperceptible to the human eye – like applying 0.05% noise to an image – can drastically change the predicted output.
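The effect in the figure can be reproduced in miniature. The sketch below is not the deep learning model from the figure; it is a toy linear classifier with random weights, used only to show why a tiny per-pixel change can flip a prediction when there are many input dimensions. Reading “0.05% noise” as a per-pixel budget of 0.0005 on a [0, 1] pixel scale is an assumption, as are all names and values in the code.

```python
import numpy as np

rng = np.random.default_rng(0)
n_features = 10_000                       # e.g. a flattened 100x100 grayscale image

w = rng.normal(size=n_features)           # weights of a toy linear classifier
x = rng.uniform(0.0, 1.0, n_features)     # stand-in "image" with pixels in [0, 1]
b = 1.0 - w @ x                           # bias chosen so x scores about +1 ("pig")


def score(v: np.ndarray) -> float:
    return float(w @ v + b)


def label(v: np.ndarray) -> str:
    return "pig" if score(v) > 0 else "airliner"


eps = 0.0005  # assumed per-pixel perturbation budget (0.05% of the pixel range)

# For a linear model, the gradient of the score with respect to the input is w,
# so moving every pixel by eps against sign(w) lowers the score as fast as
# possible for a given maximum per-pixel change.
x_adv = x - eps * np.sign(w)

print(label(x), label(x_adv))
print("largest pixel change:", np.max(np.abs(x_adv - x)))
```

No single pixel moves by more than `eps`, yet the score shifts by `eps` times the sum of the absolute weights, which grows with the number of input dimensions. This is one commonly cited intuition for why high-dimensional models can be sensitive to imperceptible perturbations.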

One way of ensuring the robustness of deep learning models such as this involves injecting additional noisy or potentially adversarial data as part of the model training process, allowing the model to “learn” how to make predictions in the presence of noise or an adversarial attack. Other techniques may involve the use of generative adversarial networks (GANs). There is usually a tradeoff, however, between the robustness of the model and its performance.
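A minimal sketch of the noise-injection idea, assuming training data held in NumPy arrays, is shown here. It covers only the simpler variant, adding random Gaussian noise to copies of the training samples; adversarial training would instead generate perturbations from the model's own gradients. The function name `augment_with_noise` and its parameters are hypothetical.

```python
import numpy as np


def augment_with_noise(X, y, noise_std=0.05, copies=2, seed=0):
    """Return the original samples plus `copies` noisy versions of each one."""
    rng = np.random.default_rng(seed)
    noisy = [X + rng.normal(0.0, noise_std, size=X.shape) for _ in range(copies)]
    X_aug = np.concatenate([X, *noisy], axis=0)
    y_aug = np.tile(y, copies + 1)  # labels are unchanged by the added noise
    return X_aug, y_aug


# Tiny usage example with two samples and two features.
X = np.array([[0.0, 1.0], [1.0, 0.0]])
y = np.array([0, 1])
X_aug, y_aug = augment_with_noise(X, y)
print(X_aug.shape, y_aug.shape)
```

The augmented arrays can then be passed to whatever training routine the prototype already uses. The noise level is a tuning choice: too little has no effect, while too much can blur the classes together and reduce accuracy on clean data.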